The Second Time Will Be The IPO Charm For Cerebras

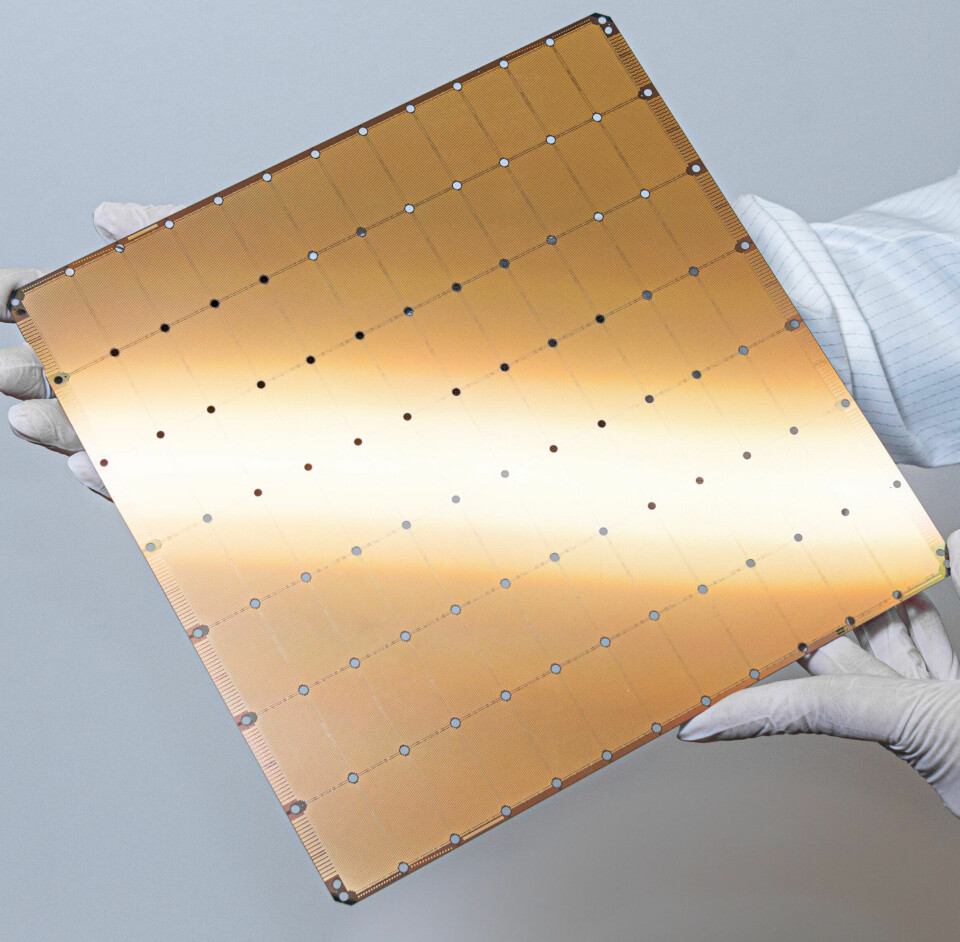

Waferscale chip pioneer and AI systems maker Cerebras Systems filed to go public back in September 2024 because it needed a Wall Street cash infusion so it could expand its customer base. At the time, it had raised $720 million in five rounds of funding, and its Series F raise was three years in the rearview mirror. With a valuation of $4 billion, it was time.

That was particularly true because 85 percent of the company’s revenue in 2024 was being driven by one lighthouse customer: Group 42, the Arabic AI model maker formed in 2018 in Abu Dhabi and backed by the government of the United Arab Emirates. G42, as the company is commonly known, inked a deal with Cerebras in July 2023 to buy $300 million of hardware, software, and services. In May 2024 it upped the ante, saying it would buy another $1.43 billion in gear and also purchase 22.85 million shares in Cerebras for $335 million within a year.

As far as we can tell from the S-1 reports that Cerebras filed in September 2024 and again this week as it takes a second run at an IPO, G42 has spent $434.5 million on Cerebras gear and support for its cloud buildout of a mix of CS-2 and CS-3 waferscale systems from 2023 through 2025. That represents 49.4 percent of Cerebras revenues over those three years. We have combed the two S-1 reports to give you a composite picture of the financials:

The biggest customer for Cerebras in 2025 was not G42, however, but a new customer added last year: the Mohamed bin Zayed University of Artificial Intelligence. It is a graduate-level research institution that was established in April 2020 and that is also located in Abu Dhabi. So these two customers pull from the same money bag, and together they accounted for 86 percent of the $510 million that Cerebras brought in last year.
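
The concentration figures above can be cross-checked with a little arithmetic. This is a back-of-envelope sketch, not anything taken from the S-1. It takes the quoted numbers at face value and derives two things: the cumulative 2023 through 2025 revenue implied by G42's $434.5 million being 49.4 percent of it, and the dollar value of the 86 percent of 2025 revenue that came from G42 and MBZUAI. Because the percentages are rounded, the derived totals are approximate. The constant and function names are illustrative.

```python
# Back-of-envelope checks on the customer-concentration figures quoted in the text.
# Inputs are the article's numbers; outputs are derived, not reported by Cerebras.

G42_SPEND_2023_2025 = 434.5   # $ millions, G42 spend on Cerebras gear and support
G42_SHARE_2023_2025 = 0.494   # G42's (rounded) share of Cerebras revenue, 2023-2025
REVENUE_2025 = 510.0          # $ millions, Cerebras revenue in 2025
TOP_TWO_SHARE_2025 = 0.86     # G42 plus MBZUAI (rounded) share of 2025 revenue


def implied_total(part: float, share: float) -> float:
    """Return the whole implied by a part and its fractional share of that whole."""
    return part / share


cumulative_revenue = implied_total(G42_SPEND_2023_2025, G42_SHARE_2023_2025)
top_two_revenue_2025 = REVENUE_2025 * TOP_TWO_SHARE_2025

print(f"Implied 2023-2025 revenue: ${cumulative_revenue:,.1f}M")
print(f"G42 + MBZUAI revenue in 2025: ${top_two_revenue_2025:,.1f}M")
```

On those assumptions, the three years come to roughly $880 million in revenue, and the two Abu Dhabi customers account for about $439 million of the $510 million booked in 2025.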

But some funny things happened on the way to that initial IPO attempt. First, code assistants became the killer app for the GenAI era. Second, agentic AI (systems talking to systems) started being a thing, and in that setting latency matters a whole lot more than it does with chattybots answering questions from humans. Third, people started figuring out something about the hardware. The very expensive GPU clusters made by Nvidia were very good at batching up inference work with reasonable response times for chattybots. But some of those exotic machines created by Cerebras and its rivals Groq and SambaNova Systems had a memory bandwidth-to-compute ratio advantage that allowed for very low latency at high levels of interactivity. These machines are loaded up with on-chip SRAM and are not so dependent on HBM stacked memory. (High interactivity here means small batches or none at all, but real-time and often single user.)
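
Why does memory bandwidth translate into latency? A common first-order model is a simple roofline-style estimate, not anything Cerebras, Groq, or SambaNova has published. It says that at batch size 1, every generated token requires streaming all of a model's weights out of memory, so the time per token is roughly weight bytes divided by memory bandwidth. Batching amortizes that same weight pass over many users, which raises aggregate throughput but not any one user's token rate.

The sketch below makes several assumptions:

* a hypothetical 70 billion parameter model with 16-bit weights
* two hypothetical devices whose bandwidth figures are placeholders, not vendor specs
* no limit on memory capacity, although real SRAM-heavy machines spread a model this large across many chips

```python
from dataclasses import dataclass


@dataclass
class Accelerator:
    name: str
    # Illustrative placeholder bandwidth, NOT a published vendor spec.
    mem_bandwidth_bytes_per_s: float


def decode_seconds_per_token(param_count: float, bytes_per_param: float,
                             accel: Accelerator) -> float:
    """Bandwidth-bound lower bound on decode time per step at batch size 1.

    Assumes each generated token requires streaming every model weight from
    memory once, and ignores compute time and KV-cache traffic.
    """
    weight_bytes = param_count * bytes_per_param
    return weight_bytes / accel.mem_bandwidth_bytes_per_s


def aggregate_tokens_per_s(batch_size: int, seconds_per_step: float) -> float:
    """Throughput across a batch, assuming the step stays bandwidth-bound.

    One pass over the weights serves every request in the batch, so total
    throughput scales with batch size while each user still waits one full
    step per token. This breaks down once the step becomes compute-bound.
    """
    return batch_size / seconds_per_step


PARAMS = 70e9          # hypothetical 70B-parameter model
BYTES_PER_PARAM = 2    # 16-bit weights

hbm_device = Accelerator("HBM-bound device (hypothetical)", 8e12)      # 8 TB/s
sram_device = Accelerator("SRAM-heavy device (hypothetical)", 800e12)  # 800 TB/s

for accel in (hbm_device, sram_device):
    step = decode_seconds_per_token(PARAMS, BYTES_PER_PARAM, accel)
    print(f"{accel.name}: {step * 1e3:.3f} ms/token, "
          f"{1 / step:,.0f} tokens/s for a single user, "
          f"{aggregate_tokens_per_s(64, step):,.0f} tokens/s across a batch of 64")
```

The hundredfold bandwidth gap in these made-up numbers becomes a hundredfold gap in per-user token rate, which is the effect described in the text. Batching only changes the aggregate column.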

And so, private equity companies started lining up to give Cerebras big bags of money, and the company quietly pulled the plug on the initial IPO attempt. The company’s Series G funding round came in at $1.1 billion in October 2025, pushing its valuation up to $8.1 billion. Another $1 billion in Series H funding came in this February, driving the company’s valuation to $23 billion.

As these funding rounds were happening, model maker OpenAI and cloud builder Amazon Web Services inked deals to install Cerebras systems to drive their AI inference workloads. Much has been made of the transformative $10 billion deal that Cerebras inked with OpenAI back in January, which includes a $1 billion working capital loan to help Cerebras scale up its manufacturing operations. The latest S-1 says that this OpenAI deal has the potential to scale to $20 billion over multiple years. It also confirms that the initial install is for 750 megawatts of CS systems through 2028, with options for another 3 gigawatts of gear in 2029 and 2030. We believe that OpenAI will mostly be installing CS-4 machines, based on a new WSE-4 architecture that we expect to come out later this year, but that is a hunch. OpenAI and Cerebras are also doing some sort of co-development for future CS machinery, something that has not been detailed at all.

In March this year, Cerebras inked a “binding term sheet” with AWS to marry CS-3 systems to AWS’s current homegrown Trainium 3 systems as well as its future Trainium 4 systems. Cerebras characterized it as a multi-year deal “to bring fast inference to an even bigger scale through global distribution.” The “even bigger scale” in that snippet is a comparison to the OpenAI deal. We do not know if the AWS deal has been fully negotiated and signed. But the latest S-1 says the term sheet is “binding with respect to pricing, exclusivity, minimum capacity, and certain other protections in favor of AWS.” The prospective deal also includes a warrant for 2.7 million shares of Cerebras, which could be worth a lot depending on the valuation that Cerebras gets on Wall Street. The vesting of this warrant is pegged to product purchases, a structure we have seen from other AI infrastructure suppliers.

Of these two deals, AWS could end up being more important in the long run than OpenAI. AWS has both a lot of customers and a lot of money it can pull from other businesses to invest in expensive AI systems. OpenAI is ambitious and clever, but it is almost certainly losing as much money each quarter as it generates in revenues, if not more.

Here’s the thing to contemplate as Cerebras files to go public again, and this time we think it will see the IPO through.

As last year came to an end, Nvidia “acquihired” most of Groq for an enormous $20 billion to add Groq LPU motors to its inference stack. That gives Nvidia the consistent low latency that its GPU systems cannot deliver. Nvidia co-founder and chief executive officer Jensen Huang showed precisely how much Nvidia needed Groq in his keynote at the GTC 2026 conference in mid-March.

That keynote made plain how breaking inference into two pieces results in better overall GenAI performance and perhaps better overall price/performance. The two pieces are the prefill phase, where the context is provided and its tokens are chewed on and analyzed, and the decode phase, where the model generates tokens as a response. The split certainly results in a better user experience, whether that user is a human or an AI agent.
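
The prefill/decode split can be sketched in a few lines. This is a toy illustration of disaggregated serving in general, not Nvidia's or Groq's actual software. The `KVCache`, `prefill`, and `decode` names are hypothetical, and the "model" is a stand-in function. The point is structural:

* Prefill sees the whole prompt at once and can process it in bulk.
* Decode must produce one token at a time, because each token depends on the ones before it.

That sequential dependency is why a low-latency engine matters most for the decode phase.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class KVCache:
    """Hypothetical per-request state handed from the prefill stage to decode."""
    states: List[int] = field(default_factory=list)


def prefill(prompt: List[int], encode: Callable[[int], int]) -> KVCache:
    # Prefill: every prompt token is known up front, so the whole prompt can be
    # processed together (in a real system, as one big compute-bound pass).
    return KVCache(states=[encode(tok) for tok in prompt])


def decode(cache: KVCache, step: Callable[[List[int]], int],
           max_new_tokens: int, eos: int) -> List[int]:
    # Decode: one token per iteration, each depending on all prior state, so
    # this loop is inherently sequential and its speed sets the user's latency.
    out: List[int] = []
    for _ in range(max_new_tokens):
        nxt = step(cache.states)
        if nxt == eos:
            break
        out.append(nxt)
        cache.states.append(nxt)
    return out


# Toy stand-ins for a model (hypothetical): keep prompt tokens as-is, and
# "predict" the next token as the sum of all prior states mod 7.
cache = prefill([1, 2, 3], encode=lambda t: t)       # would run on a prefill engine
tokens = decode(cache, step=lambda s: sum(s) % 7,     # would run on a decode engine
                max_new_tokens=4, eos=0)
print(tokens)  # [6, 5, 3, 6]
```

In a disaggregated deployment, the `KVCache` handoff between the two functions is what crosses from one class of hardware to the other.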

With Nvidia basically owning Groq, everybody else is hunting around for a fast GenAI decode engine. One has to wonder why Intel has not already bought SambaNova and why AMD has not already bought Cerebras. Arm/SoftBank might be able to create something interesting for low-latency inference from Graphcore, which SoftBank acquired in July 2024, and sell it to system makers. Arm is already doing this with server CPUs, as evidenced by the AGI CPU that Arm co-created with Meta Platforms and that it will be selling to any and all buyers later this year.

For now, Cerebras is content to have pocketed that $2.1 billion in equity. It is also content to have expanded its customer base to include a cloud builder, provided the AWS deal gets done, and a dominant AI model builder. That model builder has to come up with the money to serve up tokens to its customers one way or another.

A few thoughts to wrap this up.

The cash and equivalents line from the latest S-1 does not report the full liquidity of the company right now, or even at the end of December last year. There was another $228.7 million in restricted cash at year end, and Cerebras had another $406.5 million in marketable securities by the end of Q4 2025, too. Add those to cash and equivalents, and that is $1.34 billion in liquid assets. In early 2026, Cerebras also got $1 billion net from the Series H round and the $1 billion in working capital from OpenAI. So it has $3.34 billion of liquidity.
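
The liquidity arithmetic above works out as follows, with all figures in millions of dollars. The S-1 cash and equivalents figure is not quoted in the text, so this sketch backs it out of the stated $1.34 billion total. Because that total is rounded, the implied cash figure (about $705 million) is approximate.

```python
# Figures in $ millions, as quoted in the text.
RESTRICTED_CASH = 228.7
MARKETABLE_SECURITIES = 406.5
LIQUID_ASSETS_YEAR_END_2025 = 1_340.0   # stated (rounded) total, including cash
SERIES_H_NET = 1_000.0
OPENAI_WORKING_CAPITAL = 1_000.0

# Cash and equivalents is not quoted directly, so back it out of the stated total.
implied_cash = LIQUID_ASSETS_YEAR_END_2025 - RESTRICTED_CASH - MARKETABLE_SECURITIES

# Add the two early-2026 inflows to the year-end position.
total_liquidity = LIQUID_ASSETS_YEAR_END_2025 + SERIES_H_NET + OPENAI_WORKING_CAPITAL

print(f"Implied cash and equivalents: ${implied_cash:,.1f}M")
print(f"Total liquidity: ${total_liquidity:,.1f}M")
```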

Even so, that OpenAI buildout is expensive, and what Cerebras has in hand is enough to start tackling it. A few more billion dollars from Wall Street will certainly help. But doing the IPO is also about expanding the company enough, and making it famous enough, to attract other customers. These customers may not buy gigawatts of capacity, but they will add up to a proper customer pyramid if all goes well.

The real pressure is that Cerebras has raised $2.55 billion in funding, and now all of those investors want to make some money off that cash and the GenAI boom before something turns. There may not be a better time for Cerebras to go public than 2026, because even AI cannot predict what 2027 might look like in this crazy world we all live in.