The Market Is Pricing AI Compute the Wrong Way

What’s in This Article

Introduction

Revisiting the Thesis

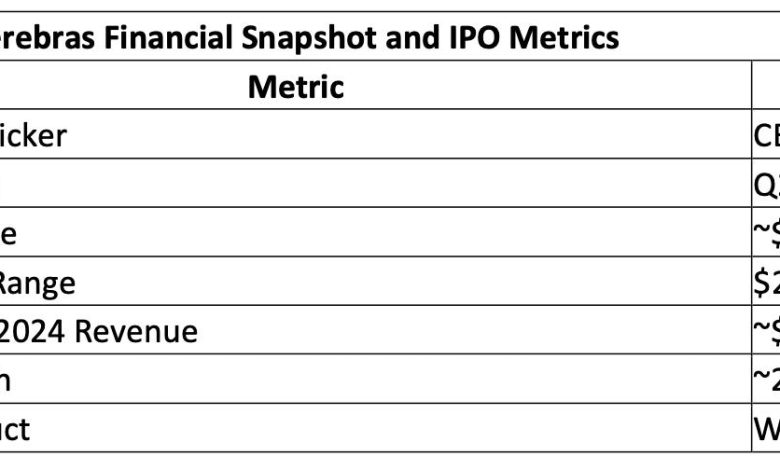

Table 1: Cerebras Financial Snapshot and IPO Metrics

Hyperscaler Adoption – OpenAI, AWS, and Distribution

Table 2: Customer Concentration Transition and Distribution Expansion

Inference Economics and the Breakdown of GPU Scaling

Table 3: Cost Structure – Cerebras vs Nvidia GPU Clusters

Groq and the Fragmentation of Inference

Table 4: Inference Architecture Segmentation

Implications for Nvidia, Broadcom, and Marvell

Table 5: AI Infrastructure Revenue Redistribution

Valuation: What the Market Is Actually Pricing

Table 6: Cerebras Valuation Sensitivity

Investor Takeaway

Cerebras is returning to the public markets at a $22–25 billion valuation, but this IPO is not about whether the company can build a wafer-scale processor. That has already been proven. The issue now is whether public markets are prepared to price a different economic model for AI compute at a time when hyperscalers are under pressure to reduce inference costs.

The prior IPO attempt in October 2025 failed not because of technology risk, but because of concentration and regulatory exposure tied to G42, an Abu Dhabi–based artificial intelligence and cloud company backed by sovereign capital. That combination limited institutional participation despite strong growth.

What has changed is demand validation. A multi-year agreement with OpenAI and emerging deployment pathways through hyperscalers, including Oracle (ORCL) and potential integration routes through Amazon Web Services (AWS), shift Cerebras from a single-customer system vendor to a candidate for infrastructure-level adoption. The IPO therefore represents the first real attempt to price inference economics as a distinct segment of AI compute, separate from Nvidia’s (NVDA) training dominance.

My October 31, 2025, Substack article “Cerebras and the Disruption of AI Inference: From Wafer-Scale Engines to Third-Tier Innovators” established that wafer-scale integration could disrupt AI inference by reducing latency, power, and system complexity. That argument was based on architecture. The IPO now tests whether that advantage is converting into hyperscaler adoption and revenue at scale.

Cerebras is entering the public markets with a valuation that reflects expected growth rather than current scale. With estimated 2024 revenue of approximately $272 million, the company is being priced as a forward infrastructure provider rather than a diversified semiconductor company. That distinction matters because it shifts the basis of valuation from current financial performance to projected adoption.

This creates a dependency on a small number of large contracts to justify valuation. Unlike Nvidia (NVDA), which built its revenue base across hyperscalers, enterprises, and developers over time, Cerebras is attempting to scale through a limited set of high-value deployments. The result is a narrow revenue funnel where a small number of customers determine growth outcomes.

The implication is that valuation depends not on steady expansion, but on step-function increases in revenue as contracts ramp. If those ramps occur as expected, the revenue multiple compresses naturally as revenue grows. If they do not, there is no diversified base to absorb the shortfall, and the multiple resets quickly.
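To make the arithmetic behind that compression concrete, here is a minimal sketch. It uses only two figures from this article: the $22–25 billion valuation range and the estimated $272 million in 2024 revenue. The two growth paths (`RAMP` and `STALL`) are hypothetical assumptions chosen for illustration, not forecasts, and the function names are mine. Valuation is held flat so that the effect of revenue growth on the multiple is isolated.

```python
# Figures taken from the article.
VALUATION_RANGE_USD_B = (22.0, 25.0)  # IPO valuation range, in $ billions
REVENUE_2024_USD_B = 0.272            # estimated 2024 revenue, in $ billions

# Hypothetical year-over-year growth paths (assumptions, not forecasts).
RAMP = [1.5, 1.0, 0.75]   # contracts ramp as expected: +150%, +100%, +75%
STALL = [0.3, 0.2, 0.2]   # ramps slip: +30%, +20%, +20%


def revenue_multiple(valuation_usd_b, revenue_usd_b):
    """Valuation divided by revenue (a price-to-sales style multiple)."""
    return valuation_usd_b / revenue_usd_b


def multiple_path(valuation_usd_b, base_revenue_usd_b, growth_rates):
    """Multiple after each year if valuation stays flat while revenue compounds."""
    revenue = base_revenue_usd_b
    path = []
    for growth in growth_rates:
        revenue *= 1.0 + growth
        path.append(revenue_multiple(valuation_usd_b, revenue))
    return path


if __name__ == "__main__":
    low, high = (
        revenue_multiple(v, REVENUE_2024_USD_B) for v in VALUATION_RANGE_USD_B
    )
    print(f"Implied multiple on 2024 revenue: {low:.1f}x to {high:.1f}x")

    floor_valuation = VALUATION_RANGE_USD_B[0]
    for label, rates in (("ramp", RAMP), ("stall", STALL)):
        path = multiple_path(floor_valuation, REVENUE_2024_USD_B, rates)
        formatted = ", ".join(f"{m:.1f}x" for m in path)
        print(f"{label:>5}: {formatted}")
```

On 2024 revenue the range works out to roughly 81x to 92x. Under the assumed ramp path the multiple falls into single digits by the third year at the low end of the range, while under the assumed stall path it stays above 40x, which is the setup in which a multiple reset becomes likely.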

According to Table 1, the valuation range implies sustained hypergrowth relative to a small revenue base, making execution over the next several quarters the primary determinant of valuation stability.